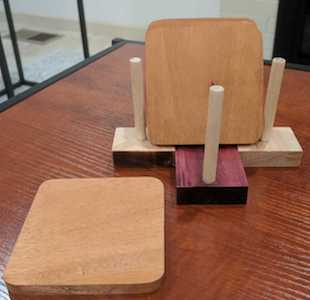

Cedar, Maple, Purpleheart Coasters

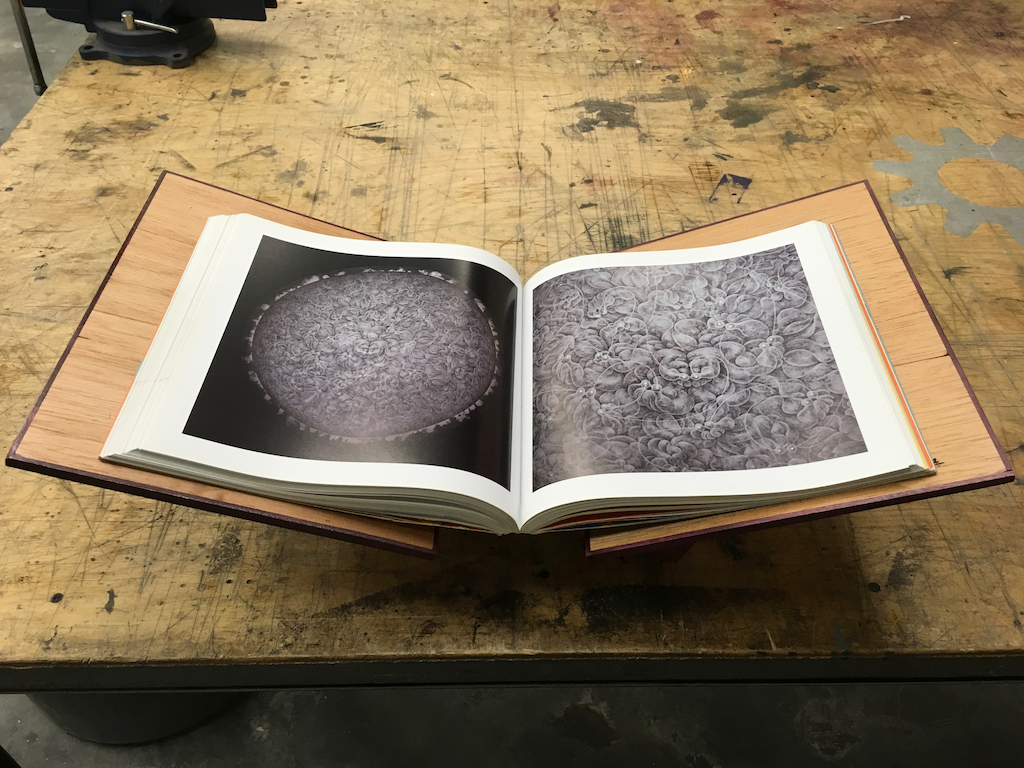

After my recent bookstand project, I was looking for a simple project to put leftover lumber to good use. These coasters are what I came up with.

A tree is the epitome of a selfless life: it provides shelter and sustenance for decades, and it is sad to see any part of such a worthy being go to waste.

In this post I describe a quick project combining cedar, purpleheart and maple. The combination is aesthetically pleasing and can be put together in a few hours with simple hand tools.

I built this project on a tight schedule, so things don’t line up 100%; someone with better skills or more time should get a cleaner result.